Big Cloud Fabric (BCF) achieves impressive automation on its own, as a fabric/underlay. The GUI is a pleasure to drive, though I have found the CLI even easier and quicker for most operations. Many of the traditional fabric tasks around uplinks, LAGs and fabric interconnects are taken care of automatically. For example, the automation possible with BCF includes:

- OS install

- Upgrade (Hitless)

- LAG creation: note that these are the LAGs between the spine and leaf switches, not LAG groups from the leaf switches down to servers/compute. For those, read on about what becomes possible once VMware integration comes into play.

- Fabric formation (Zero Touch): you do not configure any Multi-Chassis Link Aggregation (MLAG) or L2/L3 protocols (Spanning Tree, OSPF) within the fabric. All of this happens automatically.
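To make the spine-leaf LAG automation concrete, here is a minimal Python sketch of the idea, not BCF's actual implementation. It assumes each switch reports its neighbors (for example via LLDP) and groups parallel links between the same pair of switches into one LAG. `LinkObservation` and `form_fabric_lags` are hypothetical names used only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkObservation:
    """One neighbor observation on a local port (hypothetical data model)."""
    local_switch: str
    local_port: str
    peer_switch: str
    peer_port: str


def form_fabric_lags(observations):
    """Group parallel links between the same two switches into a single LAG.

    Returns {(local_switch, peer_switch): [local member ports]}, keeping the
    ports in discovery order. A single link yields a one-member LAG.
    """
    lags = defaultdict(list)
    for obs in observations:
        lags[(obs.local_switch, obs.peer_switch)].append(obs.local_port)
    return dict(lags)


if __name__ == "__main__":
    links = [
        LinkObservation("leaf1", "eth49", "spine1", "eth1"),
        LinkObservation("leaf1", "eth50", "spine1", "eth2"),
        LinkObservation("leaf1", "eth51", "spine2", "eth1"),
    ]
    print(form_fabric_lags(links))
    # {('leaf1', 'spine1'): ['eth49', 'eth50'], ('leaf1', 'spine2'): ['eth51']}
```

Because the grouping is derived from what is actually cabled, adding another leaf1-spine1 link simply adds a member on the next pass; nobody edits a LAG by hand.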

Once the integration with VMware comes into play, a further layer of automation and visibility supplements the above for each of vSphere, vSAN and NSX. These include:

vSphere:

- L1 Provision – Edge Link Detection and LAGs to Edge/Servers: automatic detection of links between leaf switches and hosts, plus LAG formation

- L2 Provision – L2 Network Creation & VM Learning: VLAN (a "segment" in BCF lingo) creation, as well as host (endpoint) learning.

- Ops – Network policy migration for vMotion / DRS

- Ops – VM/network visibility and troubleshooting
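The L2 provisioning and vMotion items can be pictured with a small Python model. It is only a sketch under assumptions: vSphere port groups are given as a plain {name: VLAN ID} dict rather than read from vCenter, each becomes a segment of the same name, and `FabricModel`, `sync_port_groups` and `learn` are hypothetical names, not part of any BCF or vCenter API.

```python
from dataclasses import dataclass, field


@dataclass
class FabricModel:
    """Toy model of segments and learned endpoints (hypothetical, not the BCF API)."""
    segments: dict = field(default_factory=dict)   # segment name -> VLAN ID
    endpoints: dict = field(default_factory=dict)  # VM MAC -> (segment, leaf, port)

    def sync_port_groups(self, port_groups):
        """Create one segment per vSphere port group, given {name: vlan_id}."""
        for name, vlan_id in port_groups.items():
            self.segments.setdefault(name, vlan_id)

    def learn(self, mac, segment, leaf, port):
        """Learn an endpoint, or move it if already known; return the old location."""
        if segment not in self.segments:
            raise KeyError(f"unknown segment: {segment!r}")
        previous = self.endpoints.get(mac)
        self.endpoints[mac] = (segment, leaf, port)
        return previous


if __name__ == "__main__":
    fabric = FabricModel()
    fabric.sync_port_groups({"web": 10, "db": 20})
    fabric.learn("00:50:56:aa:bb:01", "web", "leaf1", "eth5")
    # After a vMotion the VM shows up behind another leaf; its segment is unchanged.
    old = fabric.learn("00:50:56:aa:bb:01", "web", "leaf3", "eth7")
    print(old)                                    # ('web', 'leaf1', 'eth5')
    print(fabric.endpoints["00:50:56:aa:bb:01"])  # ('web', 'leaf3', 'eth7')
```

Keying each endpoint to its segment rather than to a physical port is what lets network policy follow the VM during vMotion/DRS: only the leaf and port change.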

vSAN:

- L1 Provision: Auto Host Detection & LAG Formation

- L2 Provision: Auto vSAN Network Creation for VMkernel communication

- L2 or L3 multicast (caveat: from vSAN 6.6 onwards, vSAN uses unicast rather than multicast)
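Whether the vSAN segment needs multicast support depends on the vSAN release, per the caveat above. Here is a minimal Python sketch of that decision, assuming dotted version strings such as "6.5"; the `vsan-<cluster>` segment name and the plan dict are made up for illustration and are not BCF output.

```python
def vsan_needs_multicast(version: str) -> bool:
    """Return True for vSAN releases that rely on multicast (those before 6.6).

    Expects a dotted version string such as "6.5" or "6.6.1".
    """
    major, minor = (int(part) for part in version.split(".")[:2])
    return (major, minor) < (6, 6)


def vsan_segment_plan(cluster: str, vlan_id: int, version: str) -> dict:
    """Describe a hypothetical vSAN VMkernel segment for one cluster."""
    return {
        "segment": f"vsan-{cluster}",  # hypothetical naming convention
        "vlan": vlan_id,
        "multicast": vsan_needs_multicast(version),
    }


if __name__ == "__main__":
    print(vsan_segment_plan("cl01", 30, "6.5"))
    # {'segment': 'vsan-cl01', 'vlan': 30, 'multicast': True}
    print(vsan_segment_plan("cl01", 30, "6.6.1")["multicast"])  # False
```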

NSX:

- Fabric as a single VTEP

- Auto Transport Network Creation for VTEP

- Overlay visibility & Analytics

- VTEP-to-VTEP troubleshooting
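A classic check when troubleshooting VTEP-to-VTEP connectivity is whether every underlay hop has a large enough MTU for the encapsulation. The Python sketch below is a generic illustration, not BCF's VTEP-to-VTEP tooling: it assumes VXLAN over an IPv4 underlay (as used by NSX for vSphere) and takes hop MTUs as a plain dict with made-up switch names.

```python
# Bytes VXLAN adds on top of the inner IP MTU, assuming an IPv4 underlay:
# outer IPv4 header (20) + UDP (8) + VXLAN header (8) + inner Ethernet header (14).
VXLAN_IPV4_OVERHEAD = 20 + 8 + 8 + 14  # = 50


def required_underlay_mtu(inner_mtu: int = 1500, inner_vlan_tagged: bool = False) -> int:
    """Smallest underlay IP MTU that carries inner_mtu-sized packets unfragmented."""
    return inner_mtu + VXLAN_IPV4_OVERHEAD + (4 if inner_vlan_tagged else 0)


def undersized_hops(hop_mtus: dict, inner_mtu: int = 1500, inner_vlan_tagged: bool = False) -> list:
    """Return the hops on a VTEP-to-VTEP path whose IP MTU is too small."""
    needed = required_underlay_mtu(inner_mtu, inner_vlan_tagged)
    return [hop for hop, mtu in hop_mtus.items() if mtu < needed]


if __name__ == "__main__":
    path = {"leaf1": 9000, "spine1": 1500, "leaf2": 1600}
    print(required_underlay_mtu())  # 1550
    print(undersized_hops(path))    # ['spine1']
```

This headroom is why NSX transport networks are commonly run at an MTU of 1600 or more.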

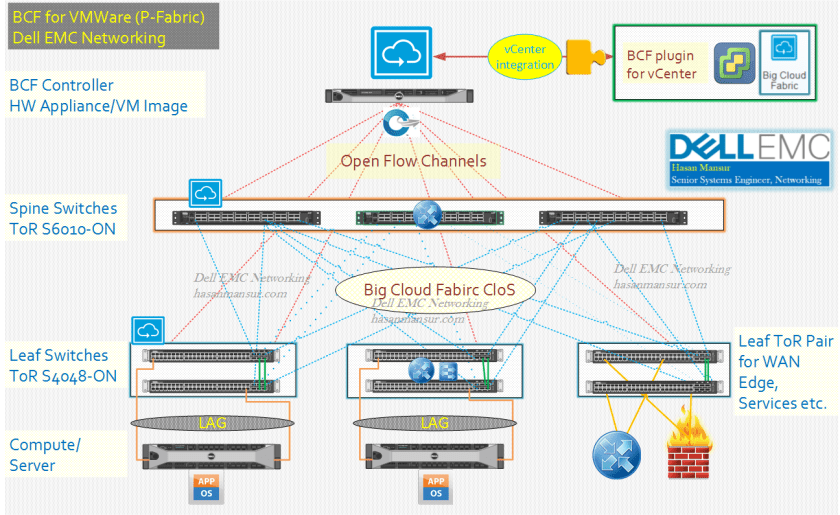

The integration with the VMware vSphere, NSX and vSAN estate allows BCF to truly shine as the underlay of choice for the emerging workloads associated with the software-defined data center (SDDC). BCF streamlines application deployment by automating the physical network configuration for VMware workloads.

- The automation delivers the agility and velocity that these workloads demand of the network, an area where networking in general was too slow to adapt until the emergence of SDN.

- Deep integration with other components of the software-defined DC starts to erode traditional siloed visibility and management. BCF has a plugin for vCenter, and consumes an API provided by vCenter, to give visibility to:

  - The network admin, via BCF, into the overlay: visibility at VM/host level, plus the ability to run an end-to-end trace (test path).

  - The VM admin, via vCenter, into the underlay: visibility into the fabric, plus the ability to run a trace (test path) from VM to VM.

  Both admins now have visibility and troubleshooting tools that reach into what has traditionally been the other admin's territory.

- BCF can integrate IP storage with vSphere deployments, with the storage and ESXi VMkernel interfaces in the same or in different subnets/VLANs:

  - IP storage and ESXi VMkernel interface on the same VLAN/segment

  - IP storage and ESXi VMkernel interface on different VLANs

  - IP storage on a shared tenant
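The difference between the first two IP storage options comes down to whether the VMkernel interface and the storage target share a subnet. A minimal Python sketch of that check, assuming one subnet per VLAN/segment; `storage_traffic_path` is a hypothetical helper, and the shared-tenant option is not modeled.

```python
import ipaddress


def storage_traffic_path(vmk_interface: str, storage_interface: str) -> str:
    """Classify ESXi VMkernel-to-IP-storage traffic as switched or routed.

    Both arguments are interfaces in CIDR notation, e.g. "10.0.10.11/24".
    """
    vmk = ipaddress.ip_interface(vmk_interface)
    storage = ipaddress.ip_interface(storage_interface)
    if vmk.network == storage.network:
        # Same subnet, hence (by assumption) same VLAN/segment: bridged at L2.
        return "switched"
    # Different subnets: traffic has to be routed between segments.
    return "routed"


if __name__ == "__main__":
    print(storage_traffic_path("10.0.10.11/24", "10.0.10.50/24"))  # switched
    print(storage_traffic_path("10.0.10.11/24", "10.0.20.50/24"))  # routed
```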

Multiple vCenters can be integrated with a single BCF fabric, each vCenter represented as a distinct tenant. A single vCenter integrating with multiple BCF pods is not supported.
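The supported topologies can be expressed as a simple validation rule. Below is a minimal Python sketch, assuming integrations are listed as (vCenter, BCF pod) pairs; `plan_vcenter_tenants` and the `tenant-<vcenter>` naming are hypothetical and not how BCF actually names tenants.

```python
from collections import defaultdict


def plan_vcenter_tenants(integrations):
    """Validate (vcenter, bcf_pod) integration pairs and assign tenants.

    Many vCenters may share one BCF pod, each as its own tenant, but a single
    vCenter may not be integrated with more than one pod.
    Returns {pod: {vcenter: tenant_name}}.
    """
    pods_per_vcenter = defaultdict(set)
    for vcenter, pod in integrations:
        pods_per_vcenter[vcenter].add(pod)
    conflicts = sorted(vc for vc, pods in pods_per_vcenter.items() if len(pods) > 1)
    if conflicts:
        raise ValueError(f"vCenter integrated with multiple BCF pods: {conflicts}")

    tenants = defaultdict(dict)
    for vcenter, pod in integrations:
        tenants[pod][vcenter] = f"tenant-{vcenter}"  # hypothetical naming
    return dict(tenants)


if __name__ == "__main__":
    print(plan_vcenter_tenants([("vc-a", "pod1"), ("vc-b", "pod1")]))
    # {'pod1': {'vc-a': 'tenant-vc-a', 'vc-b': 'tenant-vc-b'}}
    try:
        plan_vcenter_tenants([("vc-a", "pod1"), ("vc-a", "pod2")])
    except ValueError as err:
        print(err)  # vCenter integrated with multiple BCF pods: ['vc-a']
```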

To be continued in part 2.