In this fourth and final part, I summarize some of the outstanding considerations to keep in mind when creating MX7000 network designs.

Considerations

Topology

- Scalable Fabric (FSE + FEM) vs. local switching in each chassis (MX91 or MX51): a Scalable Fabric should be the preferred option.

- A Scalable Fabric extends a single fabric across multiple chassis. Without one, each chassis could be populated with switches that offer VLT as a clustering/switch virtualization mechanism (no stacking is available). Spanning that solution across multiple chassis, however, would potentially require each chassis' IOMs to uplink to a ToR outside the modular infrastructure, which aggregates connectivity from all the chassis' IOMs. For traffic that travels between chassis within the modular infrastructure, the ToR switches add an extra hop and further latency. For example, in a 5-chassis solution using Fabric A only, instead of a Scalable Fabric spanning 2 switches [MX91 FSEs] and 8 line cards [unmanaged/virtual ports] in Fabric A of chassis 2-5, you now have 10 individual switches, each of which will:

- Run an OS/Firmware, so upgrades apply

- Have individual configuration

- Register in the spanning tree topology (not transparent)

- Incur additional latency. Note that in a Scalable Fabric with FSEs and FEMs, if traffic has to ingress a FEM, travel up to the FSE, and then come back down to a FEM for egress, the total latency can be worked out from the following:

- Each FEM adds 75 ns of latency.

- The FSE adds 450 ns of latency.

- DAC latency is around 10 ns; AOC latency is typically around 150-200 ns.

- If an MX51xx is in the discussion, it has a latency of 800 ns.
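As a rough worked example of the arithmetic above, the following Python sketch sums the quoted figures for a FEM to FSE to FEM path. The helper name, the assumption of exactly two cable hops (FEM to FSE, then FSE to FEM), and the exclusion of server NIC and serialization delays are simplifications of mine for illustration, not a published Dell formula.

```python
# Latency figures quoted in this post, in nanoseconds.
FEM_NS = 75
FSE_NS = 450
DAC_NS = 10
AOC_NS_RANGE = (150, 200)


def fem_fse_fem_latency_ns(cable_ns: int) -> int:
    """Hypothetical helper: ingress FEM -> cable -> FSE -> cable -> egress FEM."""
    return FEM_NS + cable_ns + FSE_NS + cable_ns + FEM_NS


if __name__ == "__main__":
    # DAC on both hops: 75 + 10 + 450 + 10 + 75 = 620 ns
    print("DAC path:", fem_fse_fem_latency_ns(DAC_NS), "ns")
    # AOC on both hops: 900 ns at 150 ns per cable, 1000 ns at 200 ns per cable
    low, high = (fem_fse_fem_latency_ns(c) for c in AOC_NS_RANGE)
    print(f"AOC path: {low}-{high} ns")
```

With DAC cabling the whole inter-chassis path comes out below the 800 ns quoted for a single MX51xx.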

OS Feature Set Availability

- Critical: Current MX OS10 implementation is based on 10.4.0. This will merge with the main OS10 train in 10.5.0. Therefore, the current Feature Support is limited to what is offered on 10.4.0.

Latency

The end to end latency for FSE/FEM combination is quite impressive, regardless of whether the source and destination are within the same chassis, or reside in different chassis within the modular infrastructure.

- MX9116 FSEs & MX7116 FEMs:

- Since the FEM is not a switch, it does not provide local switching. The lead chassis hold the FSEs, which provide the switching engine and move traffic between ports. Once a FEM is visible to an FSE, it is mapped to a virtual port.

- The MX9116 itself is a high-performance switch, which can of course provide local switching on its own.

- The MX5108 Ethernet switch has a latency of about 800 ns.

- If the source and destination are in different chassis and the path runs through a Top of Rack switch, traffic must ingress the first MX51, travel up to the ToR switch, and then come back down to the MX51 that egresses to the destination.
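To put the ToR detour in numbers, here is a small Python sketch of the MX5108 to ToR to MX5108 path. Only the 800 ns per MX5108 comes from this post; the ToR and cable latencies are parameters you would have to supply for your own hardware, and the function name is hypothetical.

```python
MX5108_NS = 800  # per-switch latency quoted in this post


def mx5108_via_tor_latency_ns(tor_ns: int, cable_ns: int = 0) -> int:
    """Hypothetical helper: ingress MX5108 -> cable -> ToR -> cable -> egress MX5108.

    tor_ns and cable_ns are assumptions supplied by the caller; this post
    does not quote a latency for any particular ToR switch.
    """
    return MX5108_NS + cable_ns + tor_ns + cable_ns + MX5108_NS


if __name__ == "__main__":
    # Lower bound: even an idealized zero-latency ToR leaves 2 x 800 ns.
    print("Floor:", mx5108_via_tor_latency_ns(tor_ns=0), "ns")
```

Whatever the ToR contributes, the two MX5108 traversals alone set a floor of 1600 ns for this path.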

Storage

- If you need both FC and SAS, then the only solution is to use SAS switches in Storage Fabric C, and MX91xx in General purpose fabrics. However, note that since MX91 can only do FCoE to Server, you will need CNAs in the server.

- When using 32 Gb FC, the actual data rate is 28 Gbps due to 64b/66b encoding. The link between the NPU and the FC ASIC can be reconfigured via a command to allow 32G, but this decreases the total available bandwidth from 200 Gb to 112 Gb, because only 4 lanes (at 28 Gb each) are available instead of 8.
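The bandwidth trade-off in the 32G case is simple lane arithmetic, sketched below in Python. The 4 lanes at 28 Gb come from the text; the 25 Gb per-lane figure for the default 8-lane setup is my inference from 200 Gb / 8 lanes, and the function name is hypothetical.

```python
def npu_to_fc_asic_bandwidth_gb(lanes: int, per_lane_gb: int) -> int:
    """Hypothetical helper: total NPU-to-FC-ASIC bandwidth as lanes x per-lane rate."""
    return lanes * per_lane_gb


if __name__ == "__main__":
    # Default: 8 lanes; 25 Gb per lane is inferred from the 200 Gb total.
    print("Default:", npu_to_fc_asic_bandwidth_gb(8, 25), "Gb")
    # Reconfigured for 32G FC: 4 lanes at 28 Gb each.
    print("32G FC mode:", npu_to_fc_asic_bandwidth_gb(4, 28), "Gb")
```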

- As of OS10 10.4.0.R3S, FCoE and LAN traffic must be carried on physically separate uplinks.

- FC & FCoE Connectivity Summary

- MX9116n: Servers connect via FCoE. Switch can uplink

- FCoE,

- provide NPV gateway to traditional Brocade/MDS FC SAN, or

- support direct attach of a FC storage array, with zoning performed on the switch

- MX5108n: Servers connect via FCoE. Switch can uplink

- FCoE to SAN gateway switch such as S4148U

- Currently, only the S4148U can be used as the NPG device.

- MXG610s: Servers have native FC HBA connected to FC switch. Switch can

- connect to traditional FC SAN, or

- support direct attach of a FC storage array, with zoning performed on the switch.

| | FC | FCoE |
|---|---|---|
| MX9116 | External: Direct Attach + SAN NPIV | Internal Must + External optional |
| MX5108 | None | Internal Must + External Must |
| MXG610 | Internal + [External: Direct Attach + SAN] | None |

Configuration & Deployment Notes

Some notes on deployment and configuration.

Smart Fabric Creation

SmartFabric deployment consists of four broad steps, all completed using the OME-M console.

- Create the VLANs to be used in the fabric (if not already created)

- Select switches and create the fabric based on the physical topology desired

- Create uplinks from the fabric to the customer network, and assign networks to those uplinks

- Deploy the appropriate server templates

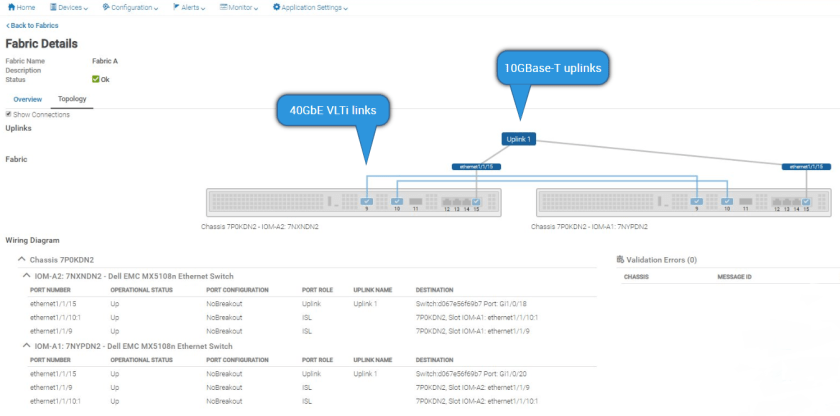

VLTi

With Smart Fabric, VLT use is imposed by the Fabric. The ports are pre-selected too, and cannot be modified by the user. In Full Switch, VLT may or may not be used.

- For the MX9116n, QSFP28-DD port groups 11 & 12 (1/1/37-40) are used.

- For the MX5108n, ports 9 & 10 are used.

- However, since port 9 is 40G and port 10 is 100G, there is a speed mismatch, which would normally be an issue with VLT. In this case, the Fabric Engine forces port 10 to operate at 40GbE instead of 100GbE when it is used for the Smart Fabric VLT.

- Ports 9 & 10 were chosen instead of 10 & 11 to allow for a 100GbE uplink. If ports 10 & 11 were used, only a 40G uplink would be available.

Spanning Tree Considerations:

SmartFabric mode defaults to RPVST+

By default, OS10EE uses RPVST+. OS10EE also supports RSTP and Multiple Spanning Tree (MST). As of 10.4.0R3, SmartFabric mode does not support MST. Regardless of operating mode, MST cannot be enabled in conjunction with VLT. With VLT, the only options are RSTP and RPVST+.

RPVST+ works well when the number of VLANs is small, which typically means up to 48 VLANs. Where more than 48 VLANs are required, Dell EMC recommends using RSTP (mono/single instance). This can be configured in either SmartFabric or Full Switch mode.
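The spanning tree guidance above can be captured as a small decision helper. This Python sketch only encodes the rules stated in this post (the 48-VLAN guideline, and MST being unavailable with VLT or in SmartFabric mode as of 10.4.0R3); the function names and return strings are my own and do not correspond to any OS10 command or API.

```python
RPVST_VLAN_GUIDELINE = 48  # guideline quoted in this post


def recommended_stp_mode(vlan_count: int) -> str:
    """Hypothetical helper: RPVST+ up to the guideline, single-instance RSTP above it."""
    if vlan_count < 1:
        raise ValueError("vlan_count must be at least 1")
    return "RPVST+" if vlan_count <= RPVST_VLAN_GUIDELINE else "RSTP"


def mst_allowed(uses_vlt: bool, smartfabric_mode: bool) -> bool:
    """MST is ruled out with VLT (any mode) and in SmartFabric mode (as of 10.4.0R3)."""
    return not uses_vlt and not smartfabric_mode


if __name__ == "__main__":
    print(recommended_stp_mode(20))   # RPVST+
    print(recommended_stp_mode(120))  # RSTP
    print(mst_allowed(uses_vlt=True, smartfabric_mode=False))  # False
```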

When connecting an OS10 RPVST+ instance to another network that supports only a single (mono) STP instance (e.g. RSTP only), RPVST+ bridges through a mono instance of CST on default VLAN 1. For non-native VLANs, all BPDU traffic is tagged and forwarded in that particular VLAN. These BPDUs are sent to a protocol-specific multicast address.

VLANs

- VLANs 4001 to 4020 are reserved for internal switch communication and cannot be assigned to an interface.

- In SmartFabric mode, although you can use the CLI to create VLANs 1 to 4000 and 4021 to 4094, you cannot assign interfaces to them. For this reason, do not use the CLI to create VLANs in SmartFabric mode.
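When planning a VLAN scheme, it can help to check candidate IDs against the reserved range up front. The following is a minimal Python sketch of that check; the function name is hypothetical, and it only validates the ID range described above (it says nothing about how the VLAN is created, which in SmartFabric mode should not be done via the CLI).

```python
# VLANs 4001-4020 are reserved for internal switch communication.
RESERVED_VLANS = range(4001, 4021)


def is_assignable_vlan(vlan_id: int) -> bool:
    """Hypothetical helper: True if vlan_id is a valid ID outside the reserved range."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError(f"VLAN ID {vlan_id} is outside 1-4094")
    return vlan_id not in RESERVED_VLANS


if __name__ == "__main__":
    for candidate in (100, 4000, 4001, 4020, 4021):
        print(candidate, is_assignable_vlan(candidate))
```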

Default VLAN

- In Full Switch mode, all switch interfaces are assigned to VLAN 1 by default.

- In SmartFabric mode, no interfaces are assigned to a default VLAN; you must manually configure member interfaces in the VLAN you choose as the default VLAN. The default VLAN must be created for any untagged traffic to cross the fabric.

NPAR

- If NPAR is not in use, both switch dependent (LACP) and switch independent teaming methods are supported.

- If NPAR is in use, only switch independent teaming methods are supported. Switch dependent teaming is NOT supported. This applies to southbound links to servers; it does not apply to northbound links to the core, which can use the LAG method of choice.
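The NPAR rule can be stated as a one-line check. The Python sketch below simply restates the two bullets above for server-facing (southbound) links; the function name and the method labels are illustrative, not identifiers from any Dell tool.

```python
def supported_server_teaming(npar_enabled: bool) -> frozenset:
    """Hypothetical helper: teaming methods supported on southbound (server) links."""
    methods = {"switch independent"}
    if not npar_enabled:
        # Switch dependent (LACP) teaming is only supported without NPAR.
        methods.add("switch dependent (LACP)")
    return frozenset(methods)


if __name__ == "__main__":
    print(sorted(supported_server_teaming(npar_enabled=False)))
    print(sorted(supported_server_teaming(npar_enabled=True)))
```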

Conclusion

In time, I might add further posts on MX7000 networking to the blog. However, I have another topic in mind that I intend to focus on over the coming weeks.

Nice series of articles related to MX7000.

Very interesting!!!

Thanks a lot

Thanks !

This was very informative. Can you confirm if the pair of mx5108n in a single chassis and setup as a smartfabric functions like a vpc/mlag pair of switches to the upstream? What I’ve noticed is that lacp pdu is only being detected from one IOM and not the other when setup as a fabric from the vpc nexus pair its up linked to. I noticed in the docs with my setup it’s only being shown uplinking to one upstream switch.

Hi John

Thanks, and yes indeed. The pair of MX51s should be a VLT pair, similar to an MLAG/vPC pair. You should have active-active multipathing from both northbound links to the Nexus. If you are unable to resolve it, reach out to support and let them have a look.

all the best,

Hasan

Hello Hassan,

Really good article about the MX7000 networking, good point on Design consideration,

cheers*Mostapha

Thanks for sharing all of this! Even if I’m not technically skilled as you, and did not understand all the implication of this, it has been an interesting read since at work the bosses are evaluating the purchase…

You are welcome, thanks for the appreciation.

Hi Hasan,

Thanks for sharing these details. Its really helpful. Can you also help in clarifying how PVLANs can be configured in Smart Fabric Mode. We have a requirement to run PVLANs and FSE9116 is configured in Smart Mode and I am not seeing any option to define pvlans.

Thanks for your help.

Regards,

Ajit

Hi Ajit,

I believe you might have to go Full Switch Mode to configure PVLANs.

Hasan